ExYPro: Process Mining Methodology

Learn with ExYPro how to discover and analyze your business processes

Discover Plotly

In this short tutorial, we will discover the Plotly data visualization library and we’ll practice…

YOLO (Part 4) Reduce detected classes

In this article we will see a trick that reduces the scope of object detection…

YOLO (Part 3) Non Maxima Suppression (NMS)

In my previous articles on YOLO we saw how to use this network … but…

YOLO (Part 2) Object detection with YOLO & OpenCV

In this article we will see step by step how to use the YOLO neural…

Will “Citizen development” suffer the same destiny as the hybrid engine?

Who has never heard of “Citizen development”? In this short post I am trying to…

YOLO (Part 1) Introduction with Darknet

In this article, we will see how, with the YOLO neural network, we can very…

Introduction to nlpcloud.io

NLPCloud.io is an API for easily using NLP in production. The API is based on…

Discover Gradio: a simple web UI for your Models

In this tutorial, I invite you to discover a small open source framework that is…

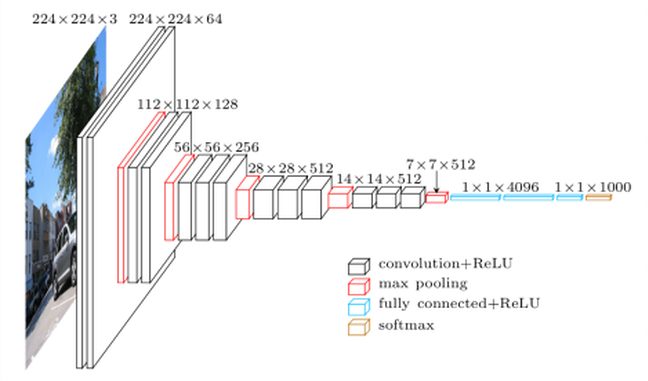

Transfer Learning with VGG

In this article we will discuss the concept of Transfer Learning … or how to…

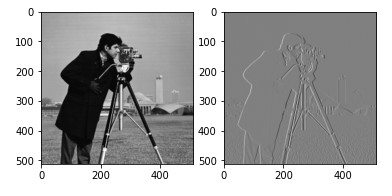

Image Processing

- Part 1: The digital representation

- Part 2: The histogram

- Part 3: Thresholding

- Part 4: Transformations

- Part 5: Morphological transformations

- Part 6: Filters & convolution

- Part 7: CNN

YOLO Object detection

- Part 1: Introduction to YOLO with Darknet

- Part 2: YOLO with OpenCV

- Part 3: YOLO & Non Maxima Suppression (NMS)

- Part 4: Reduce detected classes