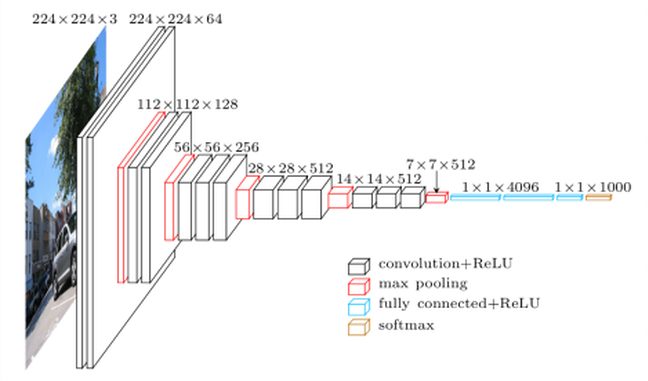

Transfer Learning with VGG

In this article we discuss transfer learning: how to avoid repeating a long, resource-intensive training run by partially reusing a pre-trained neural network. To do this we will use a network that is a standard reference for the technique: VGG-Net (VGG16).